The White House is considering a plan to review powerful artificial intelligence models before they are released, according to reports published on May 5, 2026.

The proposal would mark a major shift in US AI policy. It could give the federal government a direct role in assessing advanced models before they reach the public or are deployed across government systems.

The discussions reportedly center on a new executive order. It could create an AI working group involving government officials, national security agencies, and technology executives.

NYT: The White House is weighing a plan to vet new AI models before release, a sharp shift from Trump’s earlier hands-off approach, after Anthropic’s Mythos raised alarms over cyberattack risk and pushed officials to seek first access to powerful models.

(Wall St Engine, @wallstengine, May 4, 2026)

The immediate concern is security. Reports say officials are worried that frontier AI models could help users discover software flaws, write harmful code, or accelerate cyberattacks.

One model reportedly under scrutiny is Anthropic’s Claude Mythos. Cybersecurity experts have warned that its coding ability could make complex attacks easier to plan and execute.

However, the White House has not confirmed a final policy. Officials have described talk of a new executive order as speculation, saying any announcement would come directly from President Donald Trump.

The main risk is overreach. A pre-release review process could slow AI development, create political pressure over model launches, and give Washington unusual influence over private technology.

Anthropic said Mythos was too dangerous to release. Then four random guys in a Discord gained access on day one by guessing the URL…

This is pretty insane:

→ Group in a private Discord guessed the endpoint from Anthropic’s naming conventions

→ They figured out the… https://t.co/HUxd8pwqEH

(Josh Kale, @JoshKale, April 22, 2026)

At the same time, the security argument carries real weight. If a model can meaningfully improve cyberattack capability, the government has a clear reason to examine how it is released and who can access it.

The key question is scope. A narrow review for national security and government deployment would be easier to justify. A broader approval system for all major AI models would be more controversial.

There is a recent comparison in crypto. Trump created a digital asset working group in January 2025 to coordinate policy across agencies. That group later helped shape the administration’s crypto agenda, including stablecoin rules and agency-level action.

That history matters. Trump’s working groups can start as advisory bodies, then become policy engines. If the AI plan moves forward, it may become the first serious test of how far his administration is willing to control frontier AI before release.

The post President Trump Could Vet AI Models Before Public Release appeared first on BeInCrypto.

We prompted Sam Altman new ChatGPT AI version to predicts the next major moves for Bitcoin, Ethereum, and XRP, and what came back was a

MOEX’s expanded crypto indices could enhance market transparency and attract institutional investors, potentially boosting Russia’s crypto sector. The post Moscow Exchange to add XRP, BNB,

Heightened U.S.-Iran tensions could disrupt oil supply routes, impacting global markets, while cryptocurrency remains largely unaffected. The post US Treasury targets Iranian exchange houses amid

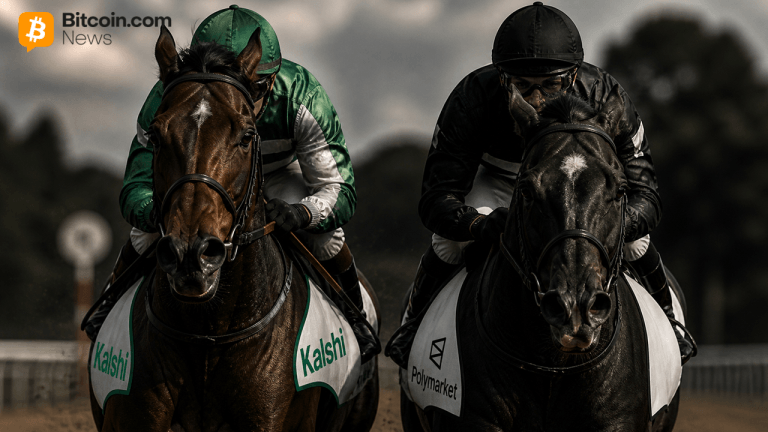

The prediction market sector posted $8.6 billion in taker volume during April 2026, with Kalshi overtaking Polymarket to claim the top spot, according to onchain
